Future of LLMs and Real Time Communication

Introduction

The intersection of large language models (LLMs) and WebRTC technology is poised to revolutionize how we interact with AI. This exploration delves into the tech stack, applications, and integration of these technologies, providing a comprehensive view of their potential for the future.

The Evolution of WebRTC

Building the Foundation

WebRTC, or Web Real-Time Communication, emerged in the 2010s as a groundbreaking technology enabling peer-to-peer communication through simple APIs. Spearheaded by Google's WebRTC team, this initiative involved substantial collaboration across industry standards bodies and companies, solving numerous complex problems over nearly a decade.

Expanding Horizons

Initially designed for person-to-person video calls, WebRTC's scope broadened significantly. A notable application was Google's Stadia, where WebRTC facilitated cloud-based gaming on iOS, turning what had been a video call between people into an interactive session with a machine running a video game. This use case highlighted WebRTC's potential beyond traditional communication.

The Rise of LLMs

From Curiosity to Innovation

Justin's fascination with AI dates back to his youth, spurred by philosophical inquiries into machine sentience. This curiosity evolved into a professional pursuit, leading him to explore AI's transformative capabilities. The leap from text-based models to multimodal AI, capable of understanding and generating various forms of media, marks a significant milestone in AI development.

Choosing the Right LLM

Building an effective AI system involves careful selection of LLMs. Different models offer varied strengths, from reasoning capacity to response speed. Key points include:

- Performance and Speed: GPT-4 on Azure provides a balanced trade-off between performance and speed, essential for real-time applications.

- Benchmarks and Testing: Models such as Mistral and Grok are tested continuously to refine the choice, with a target of sub-200-millisecond response times to match the pace of human conversation.
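
The benchmarking step described above can be sketched roughly as follows. This is a minimal illustration rather than the harness used in practice: the model names, the sample latencies, and the helpers `p95` and `within_budget` are all hypothetical, and the only figure taken from the text is the 200 ms target. It assumes latency samples have already been collected for each candidate model.

```python
import math

BUDGET_MS = 200.0  # target from the text: sub-200 ms responses


def p95(samples_ms):
    """Nearest-rank 95th percentile of a list of latency samples (ms)."""
    ordered = sorted(samples_ms)
    rank = math.ceil(0.95 * len(ordered))  # 1-based nearest-rank index
    return ordered[rank - 1]


def within_budget(latencies_by_model, budget_ms=BUDGET_MS):
    """Return {model: p95 latency} for models whose p95 is under the budget."""
    results = {name: p95(samples) for name, samples in latencies_by_model.items()}
    return {name: value for name, value in results.items() if value < budget_ms}


# Hypothetical measurements in milliseconds, made up for illustration only.
samples = {
    "model-a": [120, 135, 150, 160, 180],
    "model-b": [90, 110, 140, 210, 400],
}
shortlist = within_budget(samples)
```

Filtering on a tail percentile rather than the mean is a design choice in this sketch: in a live conversation, an occasional slow reply is more noticeable than a slightly slower average.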

Integrating LLMs with WebRTC

The Technical Synergy

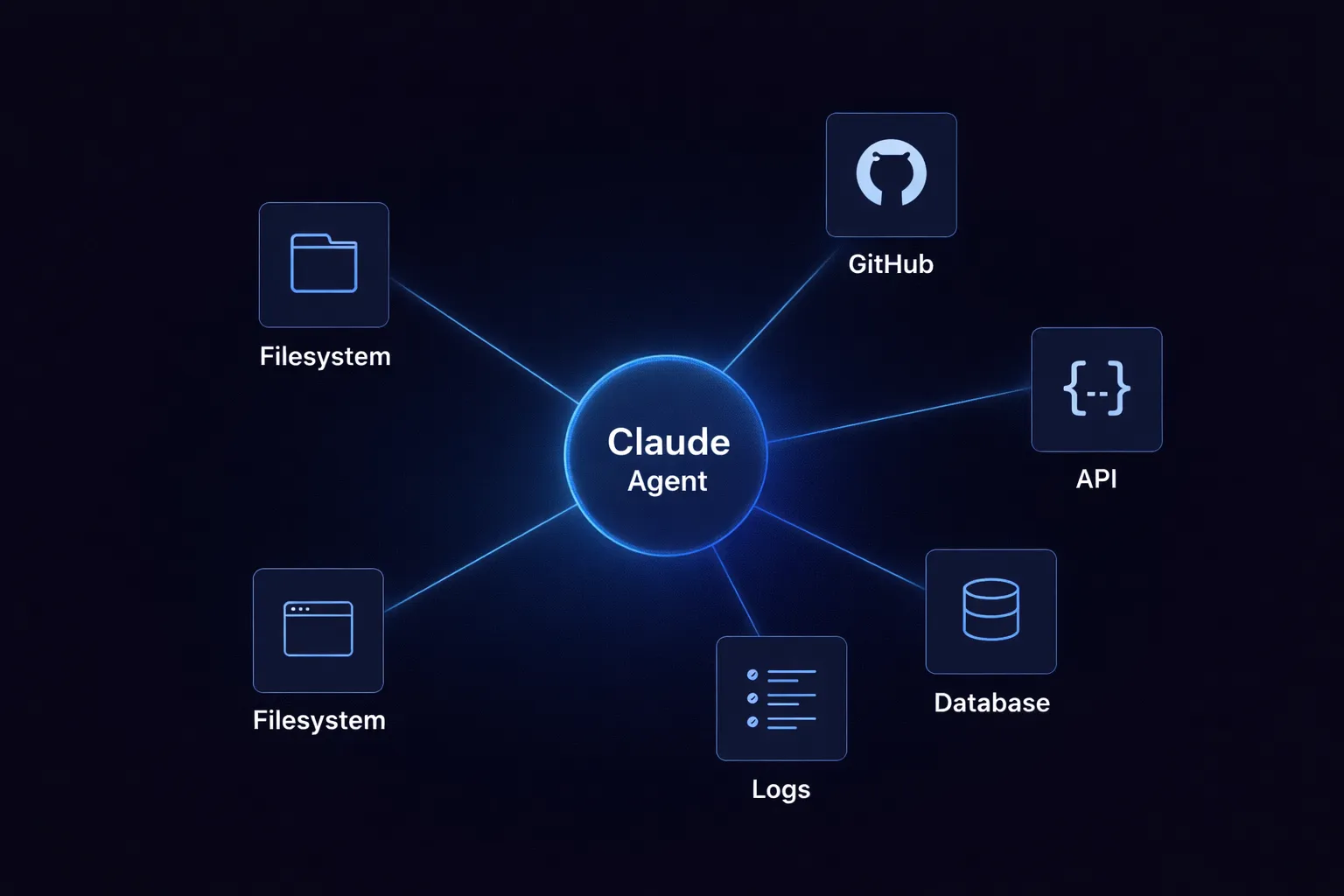

Combining LLMs with WebRTC technology opens up new realms of interaction. Key points include:

- Multimodal Applications: These applications running over WebRTC enable AI systems to perceive, understand, and communicate through voice and video.

- Enhanced Responsiveness: WebRTC's real-time capabilities improve the interactivity of AI models.

Practical Applications

Multimodal AI, supported by WebRTC, creates immersive user experiences. Notable applications include:

- AI-Powered Video Calls: Calls that comprehend and respond contextually.

- Interactive Gaming and Virtual Assistants: Enhancing user experience and pushing the boundaries of real-time AI scenarios.

Challenges and Solutions

Speed and Performance

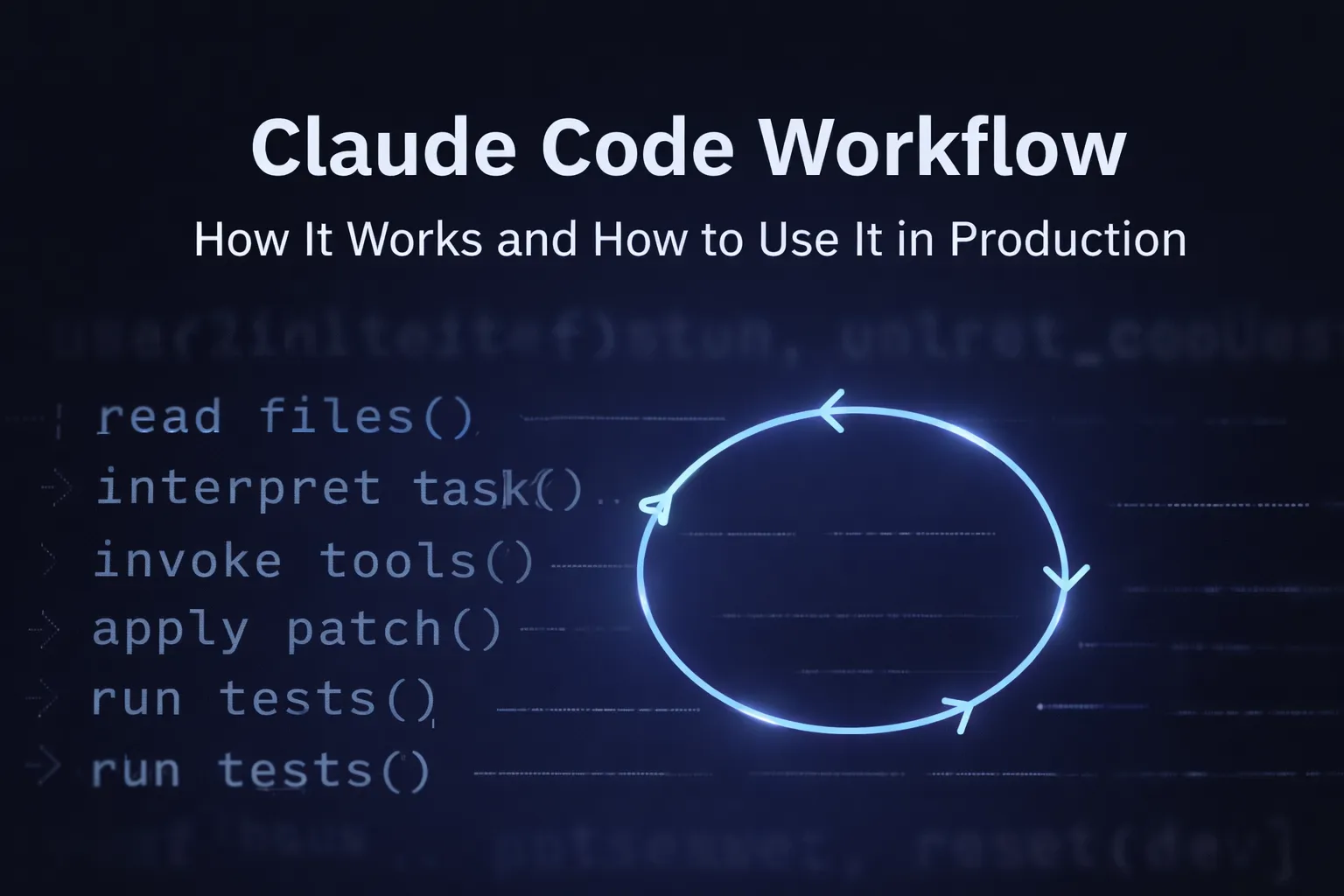

Maintaining low latency is a critical challenge. Solutions involve:

- Optimization: Each stage of the process, from automatic speech recognition (ASR) and language processing to text-to-speech conversion, requires optimization.

- Continuous Benchmarking: Advancements in model efficiency are essential to meet performance requirements.
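
To make the per-stage optimization concrete, the sketch below times each stage of a voice pipeline and reports which one dominates. It is illustrative only: the three stage functions are placeholder stubs rather than real ASR, LLM, or TTS calls, and `run_pipeline` and its `clock` parameter are hypothetical names introduced here.

```python
import time


def run_pipeline(stages, payload, clock=time.perf_counter):
    """Run stages in order; return the final output and per-stage latency in ms."""
    timings_ms = {}
    for name, stage in stages:
        start = clock()
        payload = stage(payload)
        timings_ms[name] = (clock() - start) * 1000.0
    return payload, timings_ms


# Placeholder stages: stand-ins for real ASR, LLM, and TTS services.
def fake_asr(audio):
    return "transcript of " + audio


def fake_llm(text):
    return "reply to " + text


def fake_tts(text):
    return "audio for " + text


STAGES = [("asr", fake_asr), ("llm", fake_llm), ("tts", fake_tts)]

output, timings = run_pipeline(STAGES, "user audio")
slowest = max(timings, key=timings.get)  # stage to optimize first
total_ms = sum(timings.values())         # end-to-end latency of the cascade
```

Because the stages run one after another, the end-to-end latency is the sum of the stage latencies, so the slowest stage is usually the first place to look when the total exceeds the budget.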

Unified Models

Moving towards unified models can reduce latency and improve performance. Key points include:

- End-to-End Processes: Handling processes from speech input to speech output.

- Streamlined Interaction Pipeline: Eliminating multiple processing stages to enhance speed and reliability.

Future Prospects

Advancements in Multimodal AI

The future of AI lies in its ability to fully perceive and interact in multimodal environments. Prospects include:

- Bespoke Video Content: Video generated in real time and tailored to the individual user.

- Advanced Reasoning Capabilities: As WebRTC evolves, its integration with sophisticated LLMs will pave the way for unprecedented AI experiences.

Broader Implications

The technological convergence extends beyond entertainment and communication. Potential impacts include:

- Healthcare, Education, and Customer Service: AI systems that understand and respond in real time can provide personalized and efficient interactions.

Conclusion

The integration of LLMs and WebRTC represents a significant stride towards a future where AI seamlessly blends into our daily lives. By leveraging the real-time communication prowess of WebRTC and the advanced cognitive abilities of LLMs, we can create interactive, responsive, and intelligent systems that redefine our interaction with technology. As these technologies advance, their combined potential will undoubtedly unlock new dimensions of innovation and utility.

