Key Features

Unified API for 1000+ LLMs

Multimodal & Audio APIs

Native SDK Compatibility

Load Balancing & Fallbacks

Semantic Caching

Batch APIs

Access Control & API Keys

Rate Limiting

Budgets & Cost Tracking

Guardrails

Observability & Logs

Prompt Management

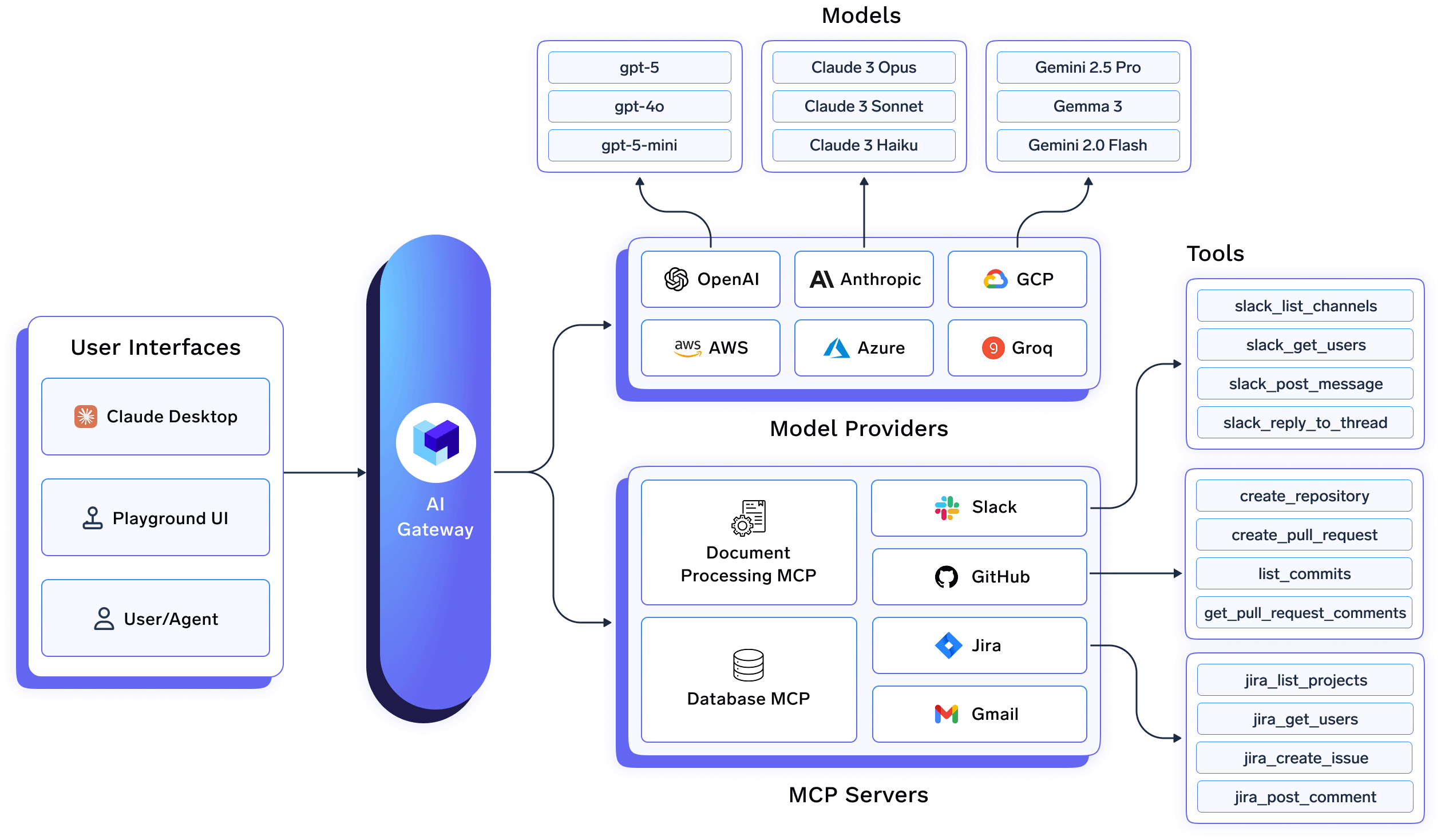

MCP Registry

Centralized MCP Auth

Virtual MCP Servers

Agent Registry

Skills Registry

SKILL.md instructions for agents and IDEs.

Flexible Deployment

Supported Model Providers

We integrate with 1000+ LLMs through the following providers.

Supported APIs

The sections below summarize provider support for each gateway endpoint, in the same order as Supported APIs in the sidebar. Each section links to the full guide for that API.

- ✅ Supported by Provider and Truefoundry

- Provided by provider, but not by Truefoundry

- Provider does not support this feature
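For scripts that consume these tables, the three legend states can be modeled as a small enum. This is a minimal Python sketch: the names `Support`, `routable_via_gateway`, and `explain` are hypothetical, and the idea that a provider-only feature has to be called on the provider directly is an assumption, not something the tables state.

```python
from enum import Enum


class Support(Enum):
    """The three legend states used in the support tables (names are illustrative)."""
    GATEWAY = "Supported by Provider and Truefoundry"
    PROVIDER_ONLY = "Provided by provider, but not by Truefoundry"
    UNSUPPORTED = "Provider does not support this feature"


def routable_via_gateway(state: Support) -> bool:
    # Only the first legend state means the feature is available through the gateway.
    return state is Support.GATEWAY


def explain(provider: str, feature: str, state: Support) -> str:
    if routable_via_gateway(state):
        return f"{provider}: {feature} works through the gateway"
    if state is Support.PROVIDER_ONLY:
        # Assumption: such features would be called on the provider directly.
        return f"{provider}: {feature} needs a direct provider call"
    return f"{provider}: {feature} is not offered by the provider"


print(explain("OpenAI", "Batch", Support.GATEWAY))
```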

Chat Completion (/chat/completions)

| Provider | Stream | Non Stream | Tools | JSON Mode | Schema Mode | Prompt Caching | Reasoning | Structured Output |
|---|---|---|---|---|---|---|---|---|
| OpenAI | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | |
| Azure OpenAI | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | |
| Anthropic | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | | |
| Bedrock | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | | |
| Vertex | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | | |
| Cohere | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | | |
| Gemini | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | |
| Groq | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | |
| AI21 | ✅ | ✅ | ✅ | | | | | |
| Cerebras | ✅ | ✅ | ✅ | ✅ | | | | |
| SambaNova | ✅ | ✅ | ✅ | ✅ | | | | |
| Perplexity-AI | ✅ | ✅ | ✅ | ✅ | ✅ | | | |
| Together-AI | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | |
| xAI | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| DeepInfra | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | | |
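A chat completion request through the gateway can be sketched with the Python standard library, assuming the gateway accepts OpenAI-style `/chat/completions` bodies (in line with the Native SDK Compatibility feature). `GATEWAY_BASE_URL`, `API_KEY`, and `MODEL_ID` are hypothetical placeholders, and the request is built but not sent. The `stream` flag corresponds to the Stream column and `response_format={"type": "json_object"}` to JSON Mode, following the OpenAI convention.

```python
import json
import urllib.request

# Hypothetical placeholders: substitute your gateway URL, API key, and model ID.
GATEWAY_BASE_URL = "https://gateway.example.com/api/llm"
API_KEY = "YOUR_GATEWAY_API_KEY"
MODEL_ID = "openai-main/gpt-4o"


def build_chat_request(messages, stream=False, **params):
    """Build (but do not send) an OpenAI-style /chat/completions POST request."""
    body = {"model": MODEL_ID, "messages": messages, "stream": stream, **params}
    return urllib.request.Request(
        url=f"{GATEWAY_BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# JSON Mode request; pass stream=True instead for a streaming call.
req = build_chat_request(
    [{"role": "user", "content": "List three colors as a JSON object."}],
    response_format={"type": "json_object"},
)
print(req.get_method(), req.full_url)
# To send it for real: urllib.request.urlopen(req)
```

Building the request separately from sending it keeps the payload easy to inspect; any OpenAI-compatible client would produce an equivalent body.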

Embedding (/embeddings)

| Provider | String | List of Strings |
|---|---|---|
| OpenAI | ✅ | ✅ |
| Azure OpenAI | ✅ | ✅ |
| Anthropic | | |
| Bedrock | ✅ | ✅ |
| Vertex | ✅ | ✅ |
| Cohere | ✅ | ✅ |
| Gemini | | |
| Groq | | |
| SambaNova | | |
| Together-AI | ✅ | ✅ |
| xAI | | |
| DeepInfra | | |
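The two columns correspond to the two accepted shapes of the `input` field. A minimal sketch, assuming OpenAI-style `/embeddings` bodies; `MODEL_ID` and `embedding_body` are hypothetical names.

```python
import json

# Hypothetical model ID; use one configured in your gateway.
MODEL_ID = "openai-main/text-embedding-3-small"


def embedding_body(text_or_texts):
    """Build an /embeddings JSON body from a single string or a list of strings."""
    if isinstance(text_or_texts, str):
        inputs = text_or_texts  # single-string input
    elif isinstance(text_or_texts, list) and all(isinstance(t, str) for t in text_or_texts):
        inputs = text_or_texts  # list-of-strings input, one vector per item
    else:
        raise TypeError("input must be a str or a list of str")
    return json.dumps({"model": MODEL_ID, "input": inputs})


print(embedding_body("hello"))
print(embedding_body(["hello", "world"]))
```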

Batch (/batches)

| Provider | Batch |
|---|---|
| OpenAI | ✅ |
| Azure OpenAI | ✅ |
| Anthropic | |
| Bedrock | ✅ |
| Vertex | ✅ |
| Cohere | |
| Gemini | |
| Groq | |
| Cerebras | |
| Together-AI | |
| xAI | |
| DeepInfra | |
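Batch jobs are typically driven by a JSONL input file with one request per line. The sketch below follows OpenAI's Batch API input format (`custom_id`, `method`, `url`, `body`); whether the gateway expects exactly this shape for every provider is an assumption, and `MODEL_ID` and `batch_jsonl` are hypothetical.

```python
import json

# Hypothetical model ID; the line format follows OpenAI's Batch API input file.
MODEL_ID = "openai-main/gpt-4o-mini"


def batch_jsonl(prompts):
    """Return JSONL text with one chat completion request per prompt."""
    lines = []
    for i, prompt in enumerate(prompts):
        request = {
            "custom_id": f"request-{i}",  # used to match results back to inputs
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {
                "model": MODEL_ID,
                "messages": [{"role": "user", "content": prompt}],
            },
        }
        lines.append(json.dumps(request))
    return "\n".join(lines) + "\n"


jsonl = batch_jsonl(["Summarize document A", "Summarize document B"])
print(jsonl)
```

In OpenAI's flow, this file is uploaded through `/files` and then referenced when creating the job through `/batches`.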

Fine Tune

| Provider | Fine Tune |
|---|---|
| OpenAI | ✅ |
| Azure OpenAI | |
| Anthropic | |
| Bedrock | |
| Vertex | ✅ |
| Cohere | |
| Gemini | |
| Groq | |
| Cerebras | |
| Together-AI | |
| xAI | |
| DeepInfra | |

Model Response (/responses)

| Provider | Model Response |
|---|---|
| OpenAI | ✅ |
| Azure OpenAI | ✅ |
| Anthropic | |
| Bedrock | |
| Vertex | |
| Cohere | |
| Gemini | |
| Groq | |
| Cerebras | |
| Together-AI | |
| xAI | |
| DeepInfra | |

Image Generation (/images/generations)

| Provider | Generate |
|---|---|
| OpenAI | ✅ |
| Azure OpenAI | ✅ |
| Bedrock | ✅ |
| Vertex | ✅ |
| Anthropic | |
| Cohere | |
| Gemini | |
| Groq | |
| Together-AI | |
| xAI | |
| DeepInfra | |

Image Edit (/images/edits)

| Provider | Edit |
|---|---|
| OpenAI | ✅ |
| Azure OpenAI | ✅ |
| Bedrock | ✅ |
| Vertex | ✅ |
| Anthropic | |
| Cohere | |
| Gemini | |
| Groq | |
| Together-AI | |
| xAI | |
| DeepInfra | |

Image Variation (/images/variations)

| Provider | Variation |
|---|---|
| OpenAI | ✅ |
| Azure OpenAI | |
| Bedrock | ✅ |
| Vertex | |
| Anthropic | |
| Cohere | |
| Gemini | |
| Groq | |
| Together-AI | |
| xAI | |
| DeepInfra | |

Text To Speech

| Provider | Text To Speech |
|---|---|
| OpenAI | ✅ |
| Azure OpenAI | ✅ |
| Azure AI Foundry | ✅ |
| Anthropic | |
| Bedrock | |
| Vertex | ✅ |
| Cohere | |
| Gemini | ✅ |
| Groq | ✅ |
| Together-AI | |
| xAI | |
| DeepInfra | |
| DeepGram | ✅ |
| Cartesia | ✅ |
| ElevenLabs | ✅ |
| Resemble AI | ✅ |
| Smallest AI | ✅ |

Audio Translation

| Provider | Translation |
|---|---|
| OpenAI | ✅ |
| Azure OpenAI | ✅ |
| Azure AI Foundry | ✅ |
| Anthropic | |
| Bedrock | |
| Vertex | |
| Cohere | |
| Gemini | |
| Groq | ✅ |
| Together-AI | |
| xAI | |
| DeepInfra | |

Speech to Text

| Provider | Transcription |
|---|---|
| OpenAI | ✅ |
| Azure OpenAI | ✅ |
| Azure AI Foundry | ✅ |
| Anthropic | |
| Bedrock | |
| Vertex | |
| Cohere | |
| Gemini | |
| Groq | ✅ |
| Together-AI | |
| xAI | |
| DeepInfra | |
| DeepGram | ✅ |
| Cartesia | ✅ |
| ElevenLabs | ✅ |
| Smallest AI | ✅ |

Live / Realtime API

| Provider | Live / Realtime API |
|---|---|
| Gemini | ✅ |
| Vertex | ✅ |
| OpenAI | ✅ |
| Azure AI Foundry | ✅ |

Files (/files)

| Provider | Files |
|---|---|
| OpenAI | ✅ |
| Azure OpenAI | |
| Anthropic | ✅ |
| Bedrock | ✅ |
| Vertex | ✅ |
| Cohere | |
| Gemini | |
| Groq | ✅ |
| Cerebras | |
| Together-AI | |
| xAI | |
| DeepInfra | |

Rerank (/rerank)

| Provider | Rerank |
|---|---|
| OpenAI | |
| Azure OpenAI | |
| Anthropic | |
| Bedrock | ✅ |
| Vertex | |
| Cohere | ✅ |
| Gemini | |
| Groq | |
| Together-AI | |
| xAI | |
| DeepInfra | |
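A rerank call takes a query plus candidate documents and returns relevance scores keyed by document index. The sketch below assumes a Cohere-style body (`query`, `documents`, `top_n`) and response (`results[].index`, `results[].relevance_score`); `MODEL_ID`, `rerank_body`, and `apply_rerank` are hypothetical, and `sample_response` is a hand-written illustration of the shape, not real model output.

```python
import json

# Hypothetical model ID; body fields follow Cohere's rerank request shape.
MODEL_ID = "cohere-main/rerank-english-v3.0"


def rerank_body(query, documents, top_n=3):
    """Build a /rerank JSON body."""
    return json.dumps({"model": MODEL_ID, "query": query,
                       "documents": documents, "top_n": top_n})


def apply_rerank(documents, response):
    """Reorder documents by descending relevance_score from a rerank response."""
    ranked = sorted(response["results"], key=lambda r: r["relevance_score"], reverse=True)
    return [documents[r["index"]] for r in ranked]


docs = ["Paris is in France.", "Bananas are yellow.", "France's capital is Paris."]
# Hand-written illustration of the response shape (deliberately unsorted).
sample_response = {"results": [
    {"index": 0, "relevance_score": 0.85},
    {"index": 1, "relevance_score": 0.02},
    {"index": 2, "relevance_score": 0.91},
]}
print(apply_rerank(docs, sample_response))
```

Sorting on the client keeps the helper correct even if a response does not arrive pre-sorted.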

Moderation (/moderations)

| Provider | Moderation |
|---|---|
| OpenAI | ✅ |
| Azure OpenAI | |
| Anthropic | |
| Bedrock | |
| Vertex | |
| Cohere | |
| Gemini | |
| Groq | |
| Cerebras | |
| Together-AI | |
| xAI | |
| DeepInfra | |
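A moderation check sends one or more texts and reads back a per-input `flagged` verdict. This sketch assumes OpenAI's moderation shape (`input` may be a list; `results[i]` aligns with `input[i]`); the `openai-main/` model prefix and the helper names are hypothetical, and `sample_response` is hand-written for illustration.

```python
import json


def moderation_body(texts):
    # Hypothetical gateway model ID wrapping OpenAI's omni-moderation-latest.
    return json.dumps({"model": "openai-main/omni-moderation-latest", "input": texts})


def flagged_inputs(texts, response):
    """Return the inputs whose matching result has flagged == True."""
    return [text for text, result in zip(texts, response["results"]) if result["flagged"]]


texts = ["Have a nice day.", "Some text a policy would flag."]
# Hand-written illustration of the response shape, not real output.
sample_response = {"results": [{"flagged": False}, {"flagged": True}]}
print(flagged_inputs(texts, sample_response))
```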

Compaction API

| Provider | Compaction API |
|---|---|
| OpenAI | ✅ |

Messages API

| Provider | Messages API |
|---|---|
| Anthropic | ✅ |
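The Messages API differs from `/chat/completions` in two details that often trip up migrations: `max_tokens` is required, and the system prompt is a top-level `system` field rather than a message with a `system` role. A minimal body builder, assuming Anthropic's request shape; `MODEL_ID` and `messages_body` are hypothetical, and how the gateway authenticates these requests is not covered here.

```python
import json

# Hypothetical gateway model ID for an Anthropic model.
MODEL_ID = "anthropic-main/claude-sonnet"


def messages_body(user_text, max_tokens=1024, system=None):
    """Build an Anthropic Messages API body; max_tokens is mandatory in that API."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    body = {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": user_text}],
    }
    if system is not None:
        body["system"] = system  # top-level field, not a "system" role message
    return json.dumps(body)


print(messages_body("Hello!", system="Answer briefly."))
```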

Proxy API (/proxy)

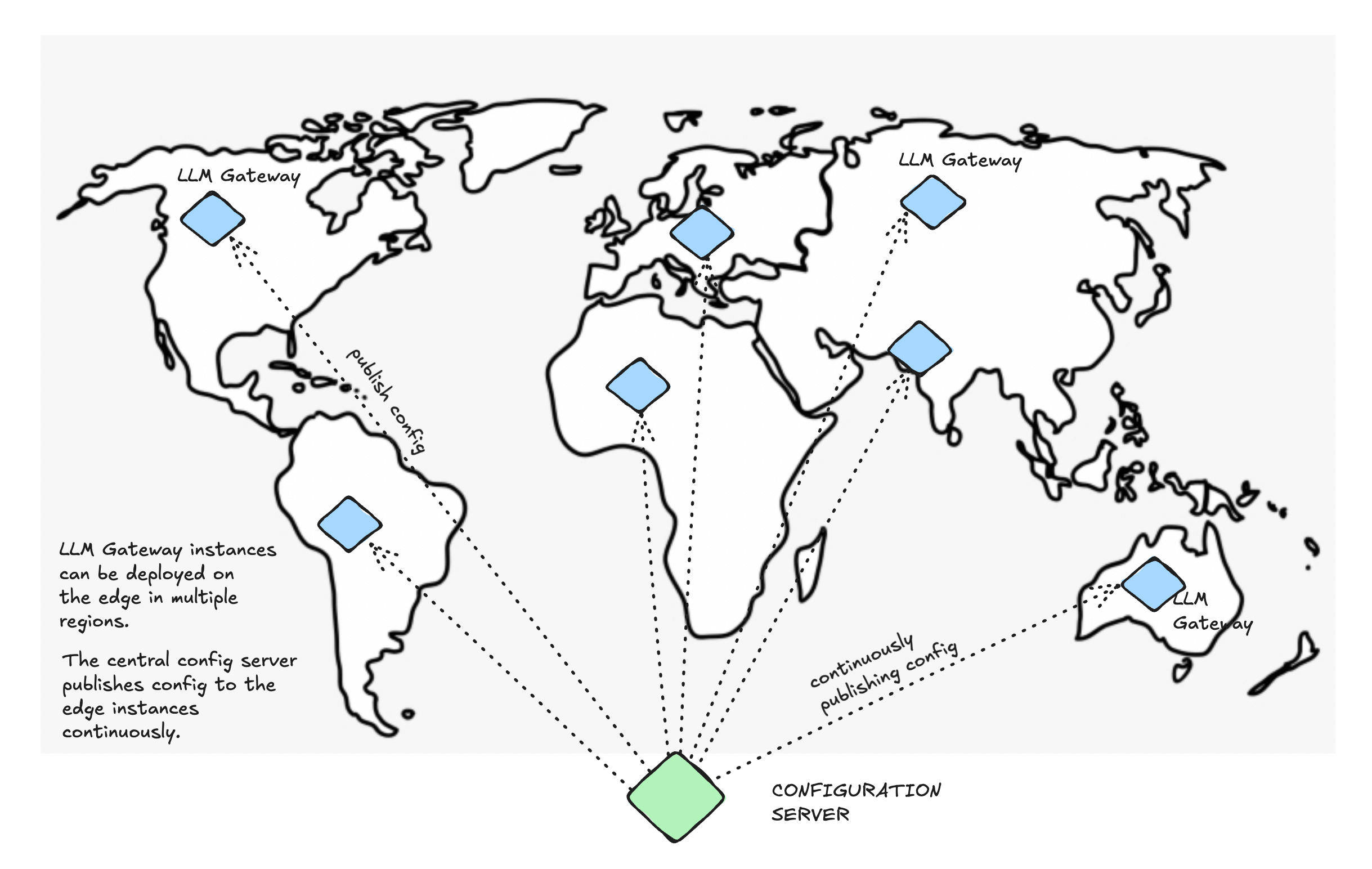

Deployment Options

You can run the AI Gateway as a fully managed SaaS, keep LLM request–response data in your own object storage while Truefoundry operates the gateway, or host the gateway plane (and optionally more of the stack) in your cloud or on-prem for stricter data residency and control. The options differ in who hosts the infrastructure, where traffic flows, and the pricing tier. Read the full comparison, including a scenario table, diagrams, and operational notes, in AI Gateway deployment options. For background on how the gateway fits into the platform, see gateway plane architecture. To start on managed SaaS, follow the quick start.

Frequently Asked Questions

What's the performance impact of using the gateway?

is closer to your users.

Can I deploy the gateway on-premise?

How do I integrate my self-hosted models?

Can I use the gateway without the full MLOps platform?