Why Guardrails?

Once AI applications go to production, they handle real user data and, in the case of agents, call external tools on their own. Things can go wrong fast:

- A customer support chatbot leaks a user’s credit card number because PII wasn’t stripped from the context.

- A coding agent runs `rm -rf /` through an MCP tool after hallucinating a shell command, and nothing stops it.

- A healthcare assistant makes up drug dosage numbers. The response reaches the patient unchecked.

- An internal Q&A bot gets jailbroken through prompt injection, leaking confidential company data.

How a TrueFoundry Guardrail Works

Each guardrail has two settings you configure: what it does with the data, and how strictly it enforces its decisions.

Operation Mode

| Mode | Behavior | Execution |
|---|---|---|
| Validate | Looks at the data, blocks the request if something is wrong. Doesn’t touch the data itself. E.g., a content moderation guardrail sees hate speech in the prompt and blocks the request outright. | LLM Input Validation can run in parallel with the model request (see below). LLM Output Validation and MCP Pre/Post Tool validation run synchronously in the request path before the response or tool result is released. |
| Mutate | Looks at the data and rewrites it. Can also block. E.g., a PII guardrail rewrites “My SSN is 123-45-6789” to “My SSN is REDACTED” and lets the request through. | Runs sequentially by priority (lower = first). |

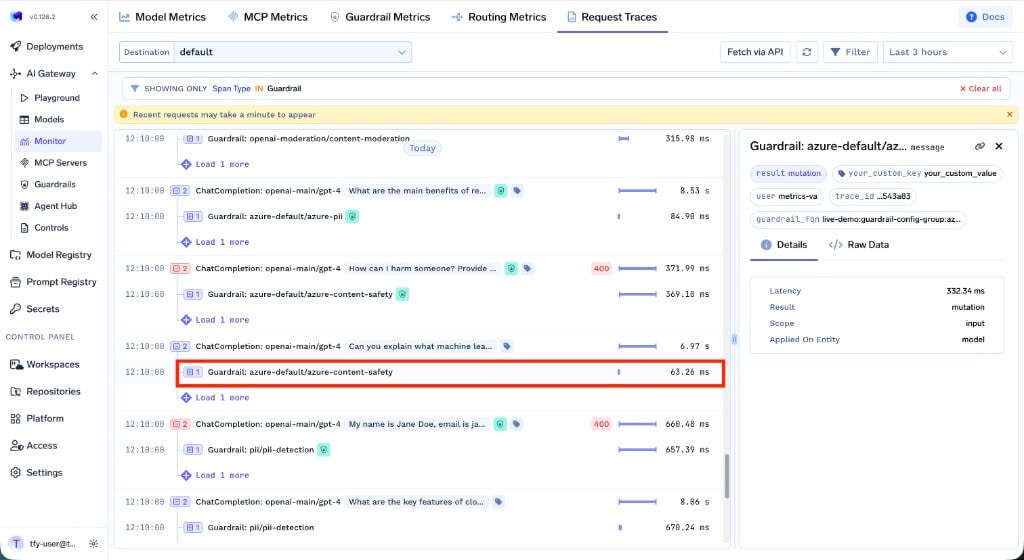

Request Traces vs. this table: Traces show each guardrail as its own span with start/end times. LLM Input Validation spans often overlap the model span because validation runs alongside the in-flight model request. Output and MCP guardrail spans typically appear after the model or tool span finishes — that ordering reflects synchronous evaluation, not a contradiction with the execution model above.
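The two operation modes can be sketched in a few lines of Python. This is only an illustration of the behavior described in the table, not the gateway's implementation: `Blocked`, `Mutator`, `run_mutators`, `run_validators`, and the example guardrails are hypothetical names introduced here.

```python
import re
from dataclasses import dataclass
from typing import Callable, Iterable, List

SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


class Blocked(Exception):
    """Hypothetical signal that a guardrail blocked the request."""


@dataclass
class Mutator:
    priority: int                 # lower value runs first
    apply: Callable[[str], str]   # returns the rewritten text


def redact_ssn(text: str) -> str:
    # Mutate: rewrite the data and let the request continue.
    return SSN_PATTERN.sub("REDACTED", text)


def reject_ssn(text: str) -> None:
    # Validate: inspect only; block by raising, never modify the text.
    if SSN_PATTERN.search(text):
        raise Blocked("SSN found in text")


def run_mutators(text: str, mutators: Iterable[Mutator]) -> str:
    # Mutators run one after another, ordered by priority.
    for mutator in sorted(mutators, key=lambda m: m.priority):
        text = mutator.apply(text)
    return text


def run_validators(text: str, validators: List[Callable[[str], None]]) -> str:
    # Validators leave the text untouched; any of them may raise Blocked.
    for check in validators:
        check(text)
    return text
```

With these definitions, `run_mutators("My SSN is 123-45-6789", [Mutator(1, redact_ssn)])` returns the redacted sentence, while `run_validators` on the same input raises `Blocked`.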

Enforcement Strategy

This decides what happens when a guardrail catches a violation, and also what happens if the guardrail itself has a problem (like a timeout or a provider outage).

| Strategy | On Violation | On Guardrail Error |
|---|---|---|
| Enforce | Block | Block |
| Enforce But Ignore On Error | Block | Let through (graceful degradation) |
| Audit | Let through (log only) | Let through |
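The enforcement table reduces to a small decision function. The sketch below encodes exactly the three rows above; the enum and function names are hypothetical and chosen only for illustration.

```python
from enum import Enum


class Strategy(Enum):
    ENFORCE = "Enforce"
    ENFORCE_BUT_IGNORE_ON_ERROR = "Enforce But Ignore On Error"
    AUDIT = "Audit"


class Outcome(Enum):
    PASSED = "passed"        # guardrail ran and found nothing
    VIOLATION = "violation"  # guardrail ran and flagged the data
    ERROR = "error"          # guardrail itself failed (timeout, outage)


def should_block(strategy: Strategy, outcome: Outcome) -> bool:
    """Return True when the request should be blocked."""
    if outcome is Outcome.PASSED:
        return False
    if outcome is Outcome.VIOLATION:
        # Only Audit lets violations through (log only).
        return strategy is not Strategy.AUDIT
    # Guardrail error: only plain Enforce fails closed.
    return strategy is Strategy.ENFORCE
```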

How TrueFoundry AI Gateway Runs Guardrails

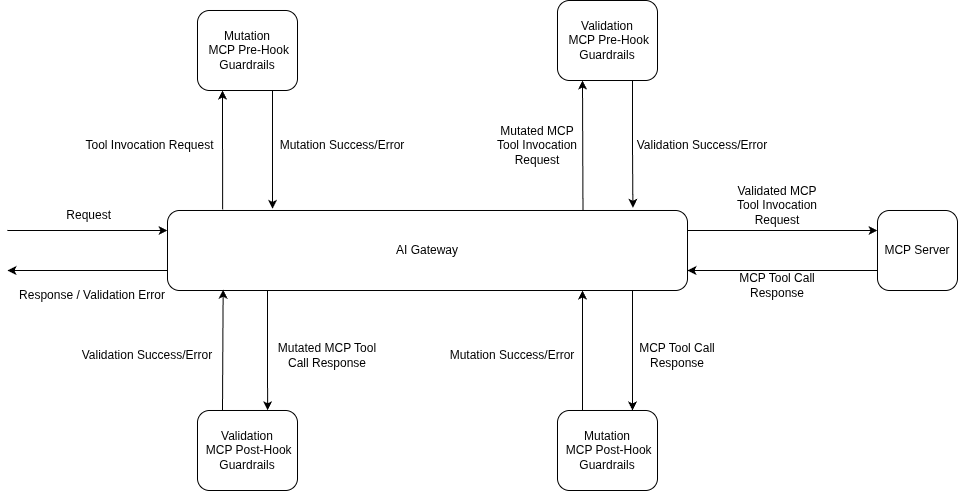

Where guardrails run depends on whether you’re making an LLM call or invoking an MCP tool.

- LLM Requests

- MCP Tool Invocations

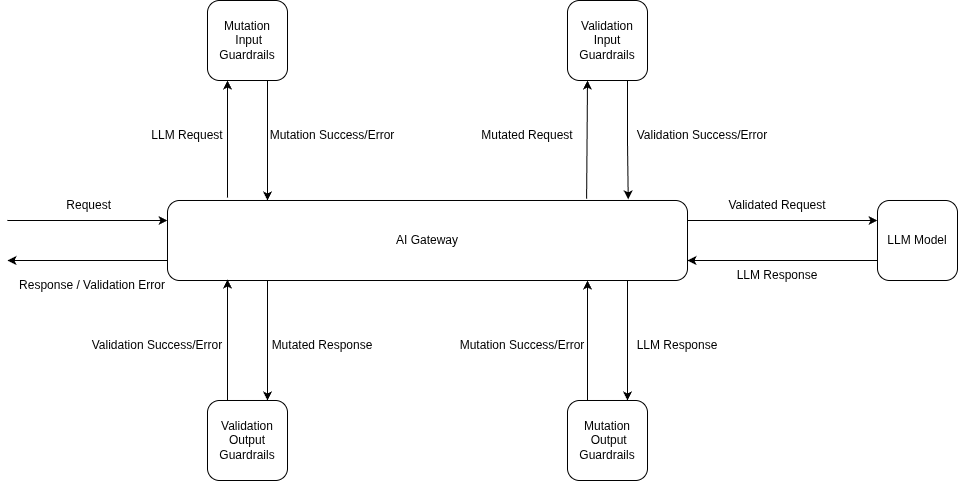

LLM requests have two hooks — Input (before the model sees the prompt) and Output (after the model responds):

LLM Input

Runs before the prompt reaches the LLM:

- PII masking and redaction

- Prompt injection detection

- Content moderation

LLM Output

Runs after the LLM responds:

- Hallucination detection

- Secrets detection

- Content filtering

- Input Mutation guardrails run first and block until they finish (e.g., redacting PII from the prompt).

- Input Validation kicks off in the background — it checks for things like prompt injection while the model request is already in flight.

- The model request starts with the mutated prompt.

- If Input Validation fails while the model is still running, the gateway cancels the model request right away so you don’t pay for it.

- Once the model responds, Output Mutation guardrails process the response (e.g., stripping secrets).

- Output Validation checks the final result. If it fails, the response is blocked — though model costs have already been incurred at this point.

- The clean response goes back to the client.

| Hook | Execution | What Happens on Failure |
|---|---|---|
| Input Validation | Async (parallel with model request) | Model request cancelled |
| Input Mutation | Sync (before model request) | Request blocked |
| Output Mutation | Sync (after model response) | Response blocked |
| Output Validation | Sync (after output mutation) | Response rejected |
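The LLM request flow above can be modeled with `asyncio`. This is a simplified sketch under stated assumptions, not the gateway's code: the function name, the `Blocked` exception, and the five hook parameters are hypothetical, and the sketch assumes Input Validation must finish before any response is released.

```python
import asyncio
from typing import Awaitable, Callable


class Blocked(Exception):
    """Hypothetical signal that a guardrail blocked the request."""


TextHook = Callable[[str], Awaitable[str]]    # mutation hooks return new text
CheckHook = Callable[[str], Awaitable[None]]  # validation hooks raise Blocked


async def handle_llm_request(
    prompt: str,
    mutate_input: TextHook,
    validate_input: CheckHook,
    call_model: TextHook,
    mutate_output: TextHook,
    validate_output: CheckHook,
) -> str:
    # Input Mutation runs first and completes before anything else starts.
    prompt = await mutate_input(prompt)

    # The model request and Input Validation run concurrently.
    model_task = asyncio.create_task(call_model(prompt))
    validation_task = asyncio.create_task(validate_input(prompt))

    try:
        await validation_task
    except Blocked:
        # Validation failed: cancel the in-flight model request.
        model_task.cancel()
        await asyncio.gather(model_task, return_exceptions=True)
        raise

    response = await model_task

    # Output Mutation, then Output Validation, both in the request path.
    response = await mutate_output(response)
    await validate_output(response)
    return response
```

Each hook is passed in as a coroutine function, so the same skeleton can be exercised with stub guardrails and a fake model in tests.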

Latency Impact of Guardrails

Guardrails add processing time, but the gateway is designed to keep that impact small.

- LLM Requests

- MCP Tool Invocations

- Input Validation runs in parallel with the model request, so in the happy path, it adds no extra wait time before you see the first token.

- Input Mutation runs before the model request, so its processing time is added directly.

- When Input Validation fails, the model request gets cancelled immediately — you don’t pay for a response you were going to throw away.

All Guardrails Pass

Input validation runs in parallel with the model request. Output guardrails process the response before it’s returned.

Input Validation Failure

Input validation fails while the model is running — the model request gets cancelled immediately to save costs.

Output Validation Failure

The model finishes, but output validation fails — the response is rejected. Model costs are already incurred at this point.

How to Apply Guardrails

You can attach guardrails in two ways:

Per-Request via Headers

Pass the `X-TFY-GUARDRAILS` header to apply guardrails on a single request. Handy for testing or when different requests need different guardrails.

Gateway-Level via Policies

Set up guardrail rules in AI Gateway → Controls → Guardrails to apply guardrails automatically based on who’s making the request, which model they’re calling, or which MCP tool is being used. This is the way to go for org-wide enforcement.

For a step-by-step walkthrough, see the Getting Started guide. For the full policy reference, see Guardrails Configuration.
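For the per-request option, a client might build the header like this. This page does not specify the header's value format, so the JSON shape, the key names `llm_input_guardrails` and `llm_output_guardrails`, and the guardrail identifiers are all assumptions made for illustration; check the Guardrails Configuration reference for the actual schema.

```python
import json
from typing import Dict, List


def build_guardrail_headers(
    input_guardrails: List[str],
    output_guardrails: List[str],
) -> Dict[str, str]:
    """Build request headers carrying a per-request guardrail selection.

    ASSUMPTION: the header value is a JSON object with these two keys.
    The real schema may differ.
    """
    payload = {
        "llm_input_guardrails": input_guardrails,
        "llm_output_guardrails": output_guardrails,
    }
    return {"X-TFY-GUARDRAILS": json.dumps(payload)}


# Hypothetical guardrail names, for illustration only.
headers = build_guardrail_headers(["my-group/pii-redaction"], ["my-group/secrets"])
```

The resulting dictionary can be merged into the headers of whichever HTTP client or SDK sends the request to the gateway.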

Supported Guardrails

The AI Gateway ships with built-in guardrails and integrates with a range of external providers, all managed through a single interface.

TrueFoundry Built-in Guardrails

Ready to use out of the box, with no external credentials needed. These integrations run on TrueFoundry-managed infrastructure: you configure them in the AI Gateway; TrueFoundry operates the guardrail execution path (no third-party API keys required for the built-ins listed here). If you need a specific vendor (for example OpenAI Moderations, Azure Content Safety, Bedrock Guardrails, or Google Model Armor), use an integration from External Providers or Custom Guardrails.

The following TrueFoundry guardrails use these services under the hood:

| Guardrail | Underlying Service | What It Does |
|---|---|---|
| Content Moderation | Azure AI Content Safety | Detects harmful content across four categories: Hate, Self-Harm, Sexual, and Violence. Each category has configurable severity thresholds. |
| PII / PHI Detection | Azure AI Language (PII Detection) | Detects and optionally redacts personally identifiable information (PII) and protected health information (PHI) such as names, addresses, SSNs, and medical record numbers. |
| Prompt Injection | Azure AI Content Safety (Prompt Shield) | Detects jailbreak attempts and prompt injection attacks designed to manipulate LLM behavior. |

Secrets Detection

Catches and redacts credentials like AWS keys, API keys, JWT tokens, and private keys.

Code Safety Linter

Flags unsafe code patterns — eval, exec, os.system, subprocess calls, dangerous shell commands.

SQL Sanitizer

Catches risky SQL: DROP, TRUNCATE, DELETE/UPDATE without WHERE, string interpolation.

Regex Pattern Matching

Matches and redacts sensitive patterns (PII, payment cards, credentials) using built-in or custom regex.

Prompt Injection

Detects prompt injection attacks and jailbreak attempts using model-based analysis.

PII Detection

Finds and redacts personally identifiable information with configurable entity categories.

Content Moderation

Blocks harmful content across hate, self-harm, sexual, and violence categories with adjustable thresholds.

Cedar Guardrails

Fine-grained access control for MCP tools using Cedar policy language with default-deny security.

OPA Guardrails

Fine-grained access control with full policy lifecycle management using Open Policy Agent.

External Providers

We also integrate with third-party guardrail providers. Don’t see yours? Reach out and we’ll be happy to add it.

OpenAI Moderations

OpenAI’s moderation API for detecting violence, hate speech, harassment, and other policy violations.

AWS Bedrock Guardrail

AWS Bedrock’s guardrail capabilities for AI models.

Azure PII

Azure’s PII detection service for identifying and redacting personal data.

Azure Content Safety

Azure Content Safety for detecting harmful or inappropriate content.

Azure Prompt Shield

Azure Prompt Shield for blocking prompt injection and jailbreak attempts.

Enkrypt AI

Advanced moderation and compliance — toxicity, bias, and sensitive data detection.

Palo Alto Prisma AIRS

Palo Alto AI Risk for content safety and threat detection.

Fiddler

Fiddler-Safety and Fiddler-Response-Faithfulness guardrails.

CrowdStrike

API-based security — content moderation, prompt injection detection, toxicity analysis. (Formerly Pangea, acquired by CrowdStrike in 2025.)

Patronus AI

Hallucination detection, prompt injection, PII leakage, toxicity, and bias evaluators.

Google Model Armor

Google Cloud Model Armor for prompt injection, harmful content, PII, and malicious URI detection.

GraySwan Cygnal

Policy violation detection and content safety monitoring powered by GraySwan Cygnal.

Akto

LLM security, prompt injection detection, and policy violation monitoring with native streaming support.

TrojAI

LLM security testing and runtime guardrails from TrojAI.

Pillar Security

Pillar Security guardrails for LLM application protection.

Custom guardrail wrappers

These providers integrate through a deployable HTTP wrapper and the Custom Guardrail contract (same dashboard flow as bring-your-own guardrails). Clone, deploy the FastAPI service, and register the URLs in AI Gateway → Guardrails.

NVIDIA NeMo

NeMo `self_check_input` / `self_check_output` for jailbreak and output safety (judge LLM via your gateway).

Guardrails AI

Hub validators for PII, secrets, toxicity, and profanity, using local heuristics in the wrapper pod.

Lasso Security

Lasso API v3 `classify` and `classifix` for validate and mutate (PII masking); policy is set in the Lasso console.

Bring Your Own Guardrail / Plugin

If the built-in and provider integrations don’t cover your use case, you can write your own. Build a custom guardrail with Guardrails.AI, a plain Python function, or any framework you prefer, and plug it into the gateway.

Custom Guardrails

Build and integrate your own guardrail using the template repo, or start from the reference integrations above.

FAQ

How do I control which messages guardrails evaluate?

By default, guardrails look at all messages in the conversation. If you only care about the latest message, set the `X-TFY-GUARDRAILS-SCOPE` header:

- `all` (default): Checks the full conversation history

- `last`: Checks only the most recent message
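The effect of the two scope values can be pictured as a filter over the message list. The helper below is a hypothetical illustration of that behavior, not gateway code; only the header name and the values `all` and `last` come from this page.

```python
from typing import Dict, List

Message = Dict[str, str]


def messages_in_scope(messages: List[Message], scope: str = "all") -> List[Message]:
    """Return the messages a guardrail would evaluate under the given scope."""
    if scope == "all":
        return list(messages)       # full conversation history
    if scope == "last":
        return list(messages[-1:])  # only the most recent message
    raise ValueError(f"unknown scope: {scope!r}")


conversation = [
    {"role": "user", "content": "Hi"},
    {"role": "assistant", "content": "Hello! How can I help?"},
    {"role": "user", "content": "What is my order status?"},
]

# Header a client would send to evaluate only the latest message.
scope_headers = {"X-TFY-GUARDRAILS-SCOPE": "last"}
to_check = messages_in_scope(conversation, scope_headers["X-TFY-GUARDRAILS-SCOPE"])
```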

`last` is faster when you don’t need to scan the whole conversation.

What happens if a guardrail service is down?

Depends on the enforcement strategy you picked:

- Enforce: Request gets blocked.

- Enforce But Ignore On Error: Request goes through anyway. This is a practical default when availability matters: you stay protected when guardrails work, but a provider outage won’t break your app. Note that requests are unchecked for the duration of the outage.

- Audit: Request always goes through.

How can I monitor guardrail execution?

Go to AI Gateway → Monitor → Request Traces. You’ll see:

- Which guardrails ran on each hook

- Whether they passed or failed, and how long they took

- What they found (secrets, SQL issues, unsafe patterns, etc.)

- What mutations were applied

Do output guardrails work with streaming responses?

No. Output guardrails are skipped when the response is streamed (`"stream": true`). Output guardrails need the complete response text to evaluate, but streaming sends tokens to the client as they are generated, so there is no full response to check before delivery begins.

What you can do:

- Set `"stream": false` if you need output guardrails to run. This ensures the gateway receives the full response, evaluates it against your output guardrails, and only then returns it to the client.

- Handle checks client-side: assemble the full streamed response on the client and run your own output validation after the stream completes.

- Use input-only guardrails with streaming — input guardrails always run regardless of streaming mode, so you still get protection on the prompt side.
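For the client-side option, a minimal sketch might look like the following. It assumes the streamed chunks are already available as plain strings (how you obtain them depends on your client library), and it uses a single regex for AWS access key IDs as a stand-in for whatever validation you actually need; both helper names are hypothetical.

```python
import re
from typing import Iterable, List

# AWS access key IDs start with "AKIA" followed by 16 uppercase letters or digits.
AWS_ACCESS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def assemble_stream(chunks: Iterable[str]) -> str:
    """Join streamed text chunks into the complete response."""
    return "".join(chunks)


def find_secrets(text: str) -> List[str]:
    """Return every substring that looks like an AWS access key ID."""
    return AWS_ACCESS_KEY_ID.findall(text)


# A secret split across chunk boundaries is only visible after assembly.
chunks = ["Your key is AKIA", "ABCDEFGHIJKLMNOP", ". Keep it safe."]
per_chunk_hits = [hit for chunk in chunks for hit in find_secrets(chunk)]
full_text_hits = find_secrets(assemble_stream(chunks))
```

Checking each chunk in isolation finds nothing here, while checking the assembled text finds the key, which is the same reason output guardrails need the complete response.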